In “Data Refusal From Below: A Framework for Understanding, Evaluating, and Envisioning Refusal as Design,” 2022 Design Fellow Jonathan Zong and co-author J. Nathan Matias examine how the notion of refusal can open new avenues in the field of data ethics. Respectively from the MIT Visualization Group and the Cornell Citizens and Technology Lab, the pair propose a four-dimensional framework to map how activists and everyday users say “no” to misuses of technology. At the same time, the researchers argue that, just like design, refusal is generative and has the potential to create alternate futures.

By Adelaide Zollinger

Jan 3, 2024

Jonathan Zong

Photo: Adelaide Zollinger

Answers by Jonathan Zong

A: Refusal was developed in feminist and Indigenous studies. It’s this idea of saying “no,” without being given permission to say “no.” Scholars like Ruha Benjamin write about refusal in the context of surveillance, race, and bioethics, and talk about it as a necessary counterpart to consent. Others, like the authors of the Feminist Data Manifest-No, think of refusal as something that can help us commit to building better futures.

Benjamin illustrates cases where the choice to refuse is not equally possible for everyone, citing examples involving genetic data and refugee screenings in the UK.

The imbalance of power in these situations underscores the broader concept of refusal, which extends beyond rejecting specific options to challenging the entire set of choices presented.

A: In my work on data ethics, I’ve been thinking about how to incorporate processes into research data collection, particularly around consent and opt-out, with a focus on individual autonomy and the idea of giving people choices about the way that their data is used. But when it comes to data privacy, simply making choices available is not enough. Choices can be unequally available, or create no-win situations where all options are bad. This led me to the concept of refusal: questioning the authority of data collectors and challenging their legitimacy.

The key idea of my work is that refusal is an act of design. I think of refusal as deliberate actions to redesign our socio-technical landscape by exerting some sort of influence. Like design, refusal is generative. Like design, it's oriented towards creating alternate possibilities and alternate futures.

Design is a process of exploring or traversing a space of possibility. Applying a design framework to cases of refusal drawn from scholarly and journalistic sources allowed me to establish a common language for talking about refusal and to imagine refusals that haven’t been explored yet.

A: The use of data for facial recognition surveillance in the U.S. is a big example we use in the paper.

When people do everyday things like post on social media or walk past cameras in public spaces, they might be contributing their data to training facial recognition systems.

For instance, a tech company may take photos from a social media site and build facial recognition systems that they then sell to the government. In the U.S., these systems are disproportionately used by police to surveil communities of color. It is difficult to apply concepts like consent and opt-out to these processes, because they happen over time and involve multiple kinds of institutions. It’s also not clear that individual opt-out would do anything to change the overall situation. Refusal then becomes a crucial avenue, at both individual and community levels, to think more broadly about how affected people can still exert some kind of voice or agency, without necessarily having an official channel to do so.

A: People who are affected by technologies are not always included in the design process for those technologies. Refusal then becomes a meaningful expression of values and priorities for those who were not part of the early design conversations. Actions taken against technologies like face surveillance — be it legal battles against companies, advocacy for stricter regulations, or even direct action like disabling security cameras — may not fit the conventional notion of participating in a design process. And yet, these are the actions available to refusers who may be excluded from other forms of participation.

I’m particularly inspired by the movement around Indigenous data sovereignty. Organizations like the First Nations Information Governance Centre work towards prioritizing Indigenous communities' perspectives in data collection, and refuse inadequate representation in official health data from the Canadian government.

I think this is a movement that exemplifies the potential of refusal, not only as a way to reject what’s being offered, but also as a means to propose a constructive alternative, very much like design. Refusal is not merely a negation, but a pathway to different futures.

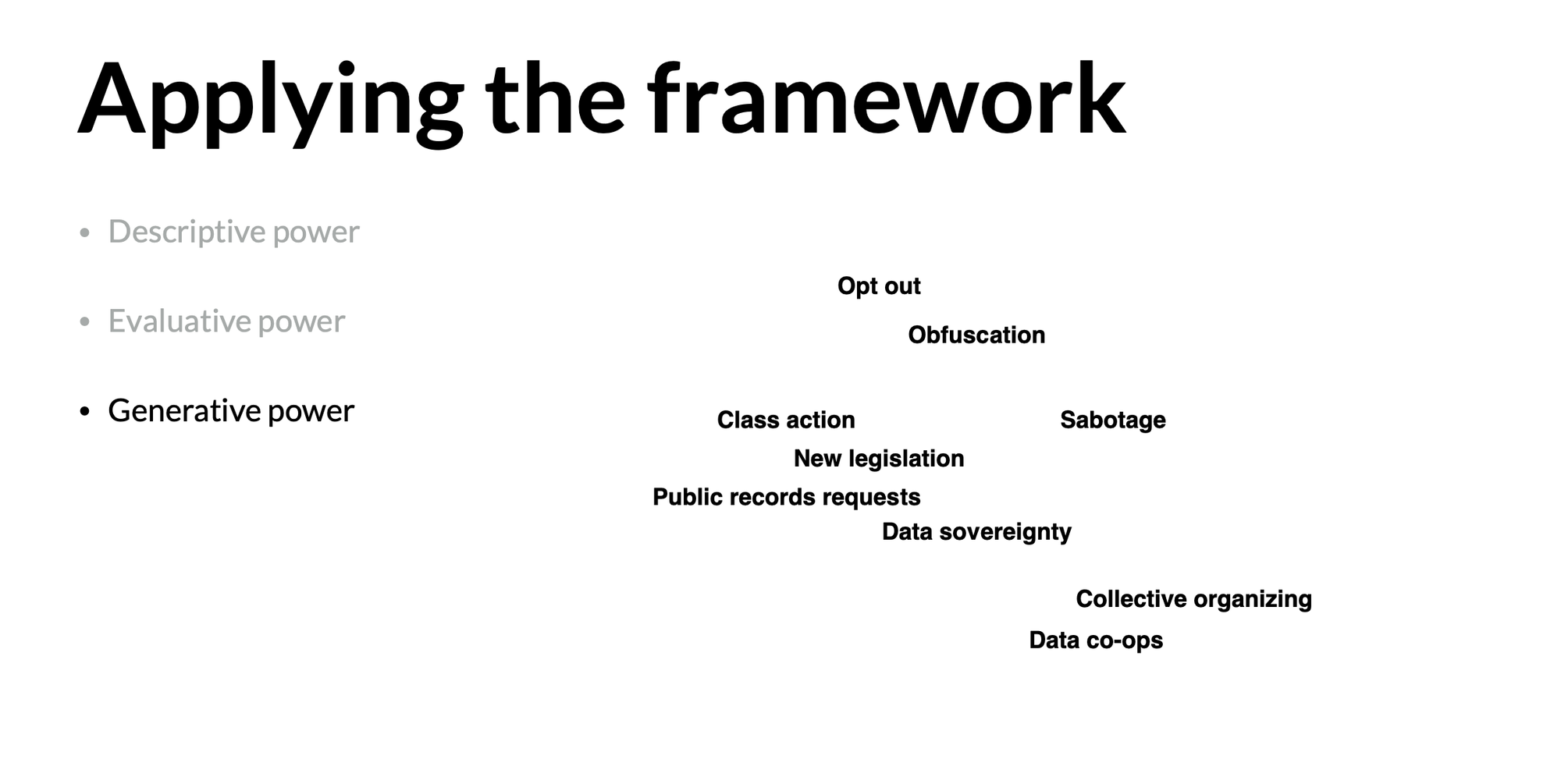

A: Refusals vary widely across contexts and scales. Developing a framework for refusal is about helping people see actions that are seemingly very different as instances of the same broader idea. Our framework consists of four facets: autonomy, time, power, and cost.

Consider the case of IBM creating a facial recognition dataset using people's photos without consent. We saw multiple forms of refusal emerge in response. IBM allowed individuals to opt out by withdrawing their photos. People collectively refused by filing a class-action lawsuit against IBM. Around the same time, many U.S. cities started passing local legislation banning the government use of facial recognition. Evaluating these cases through the framework highlights commonalities and differences.

The framework highlights varied approaches to autonomy, like individual opt-out and collective action.

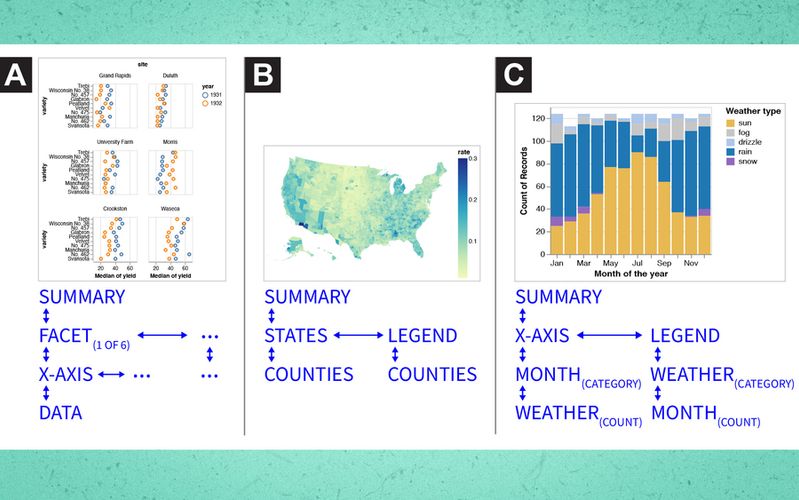

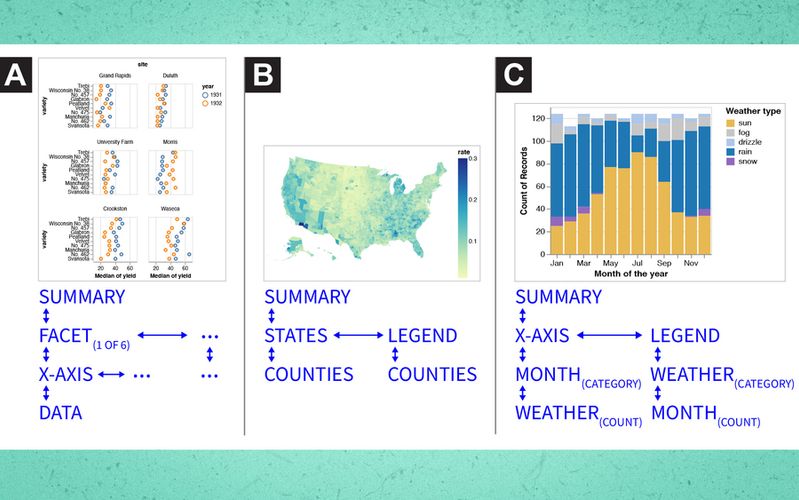

Application example of Zong and Matias's framework.

Image courtesy of the researchers

Regarding time, opt-outs and lawsuits react to past harm, while legislation might proactively prevent future harm. Power dynamics differ: withdrawing individual photos minimally influences IBM, while legislation could potentially cause longer-term change. And as for cost, individual opt-out seems less demanding, while other approaches require more time and effort, balanced against potential benefits.

The framework facilitates case description and comparison across these dimensions. I think its generative nature encourages exploration of novel forms of refusal as well. By identifying the characteristics we want to see in future refusal strategies — collective, proactive, powerful, low-cost… — we can aspire to shape future approaches and change the behavior of data collectors. We may not always be able to combine all these criteria, but the framework provides a means to articulate our aspirational goals in this context.
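As an illustration only, and not something taken from the paper, the four facets can be sketched as a small data structure that holds the three IBM-related cases and lets a reader compare them or search for a desired combination. The class name `Refusal`, the helper functions, and the coarse labels ("individual"/"collective", "reactive"/"proactive", "low"/"high") are all assumptions made for this sketch. Treating the city bans as "collective" and "high" power is one reading of the answers above, and the lawsuit's power is left as `None` because the interview does not characterize it.

```python
from dataclasses import dataclass
from typing import Optional

FACETS = ("autonomy", "time", "power", "cost")


@dataclass(frozen=True)
class Refusal:
    """One case of refusal, labeled along the four facets (labels are assumptions)."""
    name: str
    autonomy: str          # "individual" or "collective"
    time: str              # "reactive" or "proactive"
    power: Optional[str]   # "low" or "high"; None where the interview does not say
    cost: str              # "low" or "high"


# Coarse readings of the three cases discussed in the interview.
CASES = [
    Refusal("opt-out of IBM dataset", "individual", "reactive", "low", "low"),
    Refusal("class-action lawsuit", "collective", "reactive", None, "high"),
    Refusal("municipal ban on government use", "collective", "proactive", "high", "high"),
]


def matching(cases, **wanted):
    """Return the cases whose facets equal every requested value."""
    return [c for c in cases if all(getattr(c, k) == v for k, v in wanted.items())]


def shared_facets(a, b):
    """Return the facets on which two cases carry the same known label."""
    return [f for f in FACETS
            if getattr(a, f) is not None and getattr(a, f) == getattr(b, f)]


if __name__ == "__main__":
    # Which cases are both collective and proactive?
    print([c.name for c in matching(CASES, autonomy="collective", time="proactive")])
    # The aspirational combination (collective, proactive, powerful, low-cost).
    print(matching(CASES, autonomy="collective", time="proactive",
                   power="high", cost="low"))
    # What do the opt-out and the lawsuit have in common?
    print(shared_facets(CASES[0], CASES[1]))
```

Under these labels, none of the three cases matches the full aspirational combination, which mirrors the point that the criteria cannot always be combined. Real cases are more nuanced than two-valued labels, so a sketch like this is a way to organize comparison rather than a measurement.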

A: I hope to expand the notion of who can participate in design, and whose actions are seen as legitimate expressions of design input. I think a lot of work so far in the conversation around data ethics prioritizes the perspective of computer scientists who are trying to design better systems, at the expense of the perspective of people for whom the systems are not currently working.

So, I hope designers and computer scientists can embrace the concept of refusal as a legitimate form of design, and a source of inspiration. There's a vital conversation happening, one that should influence the design of future systems, even if expressed through unconventional means.

One of the things I want to underscore in the paper is that design extends beyond software. Taking a socio-technical perspective, the act of designing encompasses software, institutions, relationships, and governance structures surrounding data use. I want people who aren’t software engineers, like policymakers or activists, to view themselves as integral to the technology design process.
